In 2017, a group of 72 authors proposed to enhance reproducibility by changing the p-value threshold for statistical significance from 0.05 to 0.005. Other researchers responded that imposing a more stringent significance threshold would aggravate problems such as data dredging; alternative propositions are thus to select and justify flexible p-value thresholds before collecting data, or to interpret p-values as continuous indices, thereby discarding thresholds and statistical significance.


Some journals encouraged authors to do more detailed analysis than just a statistical significance test. In social psychology, the journal Basic and Applied Social Psychology banned the use of significance testing altogether from papers it published, requiring authors to use other measures to evaluate hypotheses and impact. Other editors, commenting on this ban, have noted: "Banning the reporting of p-values, as Basic and Applied Social Psychology recently did, is not going to solve the problem because it is merely treating a symptom of the problem. There is nothing wrong with hypothesis testing and p-values per se as long as authors, reviewers, and action editors use them correctly." Some statisticians prefer to use alternative measures of evidence, such as likelihood ratios or Bayes factors. Using Bayesian statistics can avoid confidence levels, but also requires making additional assumptions, and may not necessarily improve practice regarding statistical testing. The widespread abuse of statistical significance represents an important topic of research in metascience.

In 2016, the American Statistical Association (ASA) published a statement on p-values, saying that "the widespread use of 'statistical significance' (generally interpreted as 'p ≤ 0.05') as a license for making a claim of a scientific finding (or implied truth) leads to considerable distortion of the scientific process".


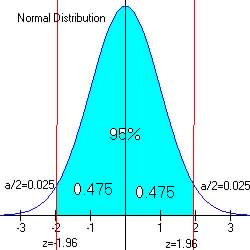

Overuse in some journals

See also: Misuse of p-values

Starting in the 2010s, some journals began questioning whether significance testing, and particularly using a threshold of α = 5%, was being relied on too heavily as the primary measure of validity of a hypothesis.

Researchers focusing solely on whether their results are statistically significant might report findings that are not substantive and not replicable. There is also a difference between statistical significance and practical significance: a study that is found to be statistically significant may not necessarily be practically significant.

With a one-tailed test, the null hypothesis can be rejected with a less extreme result than with a two-tailed test at the same significance level, because the entire rejection region lies in one tail. The one-tailed test is only more powerful than a two-tailed test if the specified direction of the alternative hypothesis is correct. If it is wrong, however, then the one-tailed test has no power.

Significance thresholds in specific fields

Further information: Standard deviation and Normal distribution

In specific fields such as particle physics and manufacturing, statistical significance is often expressed in multiples of the standard deviation or sigma (σ) of a normal distribution, with significance thresholds set at a much stricter level (e.g. 5σ). For instance, the certainty of the Higgs boson particle's existence was based on the 5σ criterion, which corresponds to a p-value of about 1 in 3.5 million. In other fields of scientific research such as genome-wide association studies, significance levels as low as 5×10⁻⁸ are not uncommon, as the number of tests performed is extremely large.
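The field-specific thresholds mentioned above can be checked numerically. The following is a minimal sketch, not taken from the source: it assumes a one-sided tail of a standard normal distribution for the sigma-to-p conversion (the convention that reproduces the "1 in 3.5 million" figure) and, for the genome-wide case, a Bonferroni correction of α = 0.05 over a hypothetical one million independent tests. The function names are illustrative only.

```python
import math


def one_sided_p_from_sigma(n_sigma: float) -> float:
    """Upper-tail probability of a standard normal beyond n_sigma.

    Uses the complementary error function so that very small tail
    areas are not lost to floating-point cancellation.
    """
    return 0.5 * math.erfc(n_sigma / math.sqrt(2))


def bonferroni_threshold(alpha: float, n_tests: int) -> float:
    """Per-test significance level that keeps the family-wise level at alpha."""
    return alpha / n_tests


# 5 sigma criterion used in particle physics (one-sided tail assumed).
p_5sigma = one_sided_p_from_sigma(5.0)
print(f"5 sigma one-sided p-value: {p_5sigma:.3e}")
print(f"roughly 1 in {1 / p_5sigma:,.0f}")

# Hypothetical genome-wide study: one million tests is an assumed count.
gwas_alpha = bonferroni_threshold(0.05, 1_000_000)
print(f"per-test threshold for 1,000,000 tests: {gwas_alpha:.1e}")
```

Under these assumptions the 5σ tail area comes out near 2.9×10⁻⁷, and dividing 0.05 by one million tests gives the 5×10⁻⁸ level quoted for genome-wide association studies.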

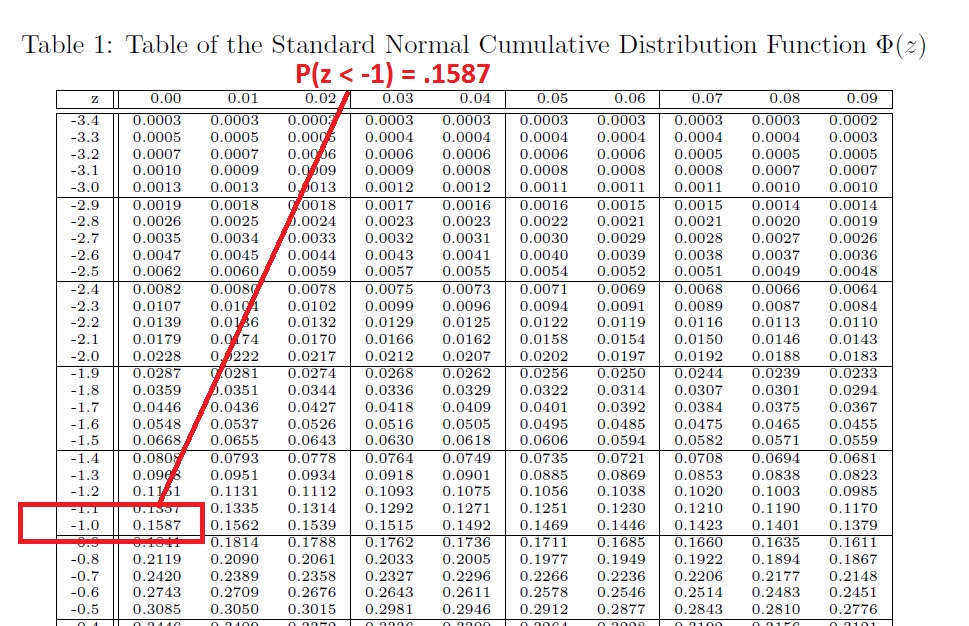

In statistical hypothesis testing, a result has statistical significance when it is very unlikely to have occurred given the null hypothesis (or simply by chance alone). More precisely, when a study's defined significance level, denoted by α, is set to 5%, the conditional probability of a type I error, given that the null hypothesis is true, is 5%, and a statistically significant result is one where the observed p-value is less than (or equal to) 5%.

When drawing data from a sample, this means that the rejection region comprises 5% of the sampling distribution. These 5% can be allocated to one side of the sampling distribution, as in a one-tailed test, or partitioned to both sides of the distribution, as in a two-tailed test, with each tail (or rejection region) containing 2.5% of the distribution. The use of a one-tailed test is dependent on whether the research question or alternative hypothesis specifies a direction, such as whether a group of objects is heavier or the performance of students on an assessment is better. A two-tailed test may still be used but it will be less powerful than a one-tailed test, because the rejection region for a one-tailed test is concentrated on one end of the null distribution and is twice the size (5% vs. 2.5%) of each rejection region for a two-tailed test.
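The allocation of the 5% rejection region can be illustrated with a short sketch. It assumes a z-test whose null distribution is standard normal, which the text does not specify, and the helper names `critical_z` and `is_significant` are hypothetical. The one-tailed case is taken to be upper-tailed.

```python
from statistics import NormalDist

ALPHA = 0.05
std_normal = NormalDist()  # null distribution assumed: mean 0, sd 1


def critical_z(alpha: float, two_tailed: bool) -> float:
    """Positive z value beyond which the null hypothesis is rejected."""
    # A two-tailed test splits alpha evenly between the two tails.
    tail_area = alpha / 2 if two_tailed else alpha
    return std_normal.inv_cdf(1 - tail_area)


def is_significant(z: float, alpha: float = ALPHA, two_tailed: bool = True) -> bool:
    """Compare the p-value of an observed z statistic with alpha."""
    if two_tailed:
        p_value = 2 * (1 - std_normal.cdf(abs(z)))
    else:
        # Upper-tailed test: only large positive z counts as evidence.
        p_value = 1 - std_normal.cdf(z)
    return p_value <= alpha


print(f"one-tailed critical z: {critical_z(ALPHA, two_tailed=False):.3f}")
print(f"two-tailed critical z: {critical_z(ALPHA, two_tailed=True):.3f}")

# The same observation can be significant one-tailed but not two-tailed.
print(is_significant(1.8, two_tailed=False))
print(is_significant(1.8, two_tailed=True))

# An effect in the unspecified direction is never flagged by the one-tailed test.
print(is_significant(-1.8, two_tailed=False))
```

The one-tailed cutoff is smaller than the two-tailed one because all of α sits in a single tail, so a less extreme statistic is enough to reject. The last call shows the cost: an equally large effect in the opposite direction goes undetected.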